Storage in a Seller’s Market: Flash Prices, Licensing Shifts, and Why IT Leaders Must Move Faster

- The NAND flash supply crunch and price escalation

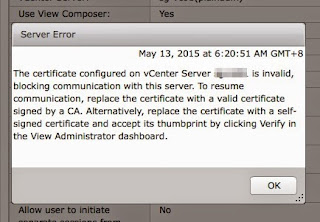

- Post-acquisition licensing shifts following Broadcom’s acquisition of VMware

The VMware Effect: When Licensing Strategy Becomes a Market Signal

Why Customers Felt Cornered

- Migration timelines: Platform assessments and migrations take years, not months.

- Application certification: Many production systems are certified only on VMware platforms.

- Compliance requirements: Running unsupported infrastructure is not acceptable in regulated environments.

- Operational risk: Downtime or instability costs far more than licensing increases.

Vendor strategy can change faster than your ability to migrate.

NAND Flash Shortage: Déjà Vu With Higher Stakes

What’s Happening on the Ground

- Server and storage hardware price increases reported up to 70% in some cases.

- Quote validity shrinking from 30 days → 2 weeks → 24 hours.

- Vendors rationing supply and prioritizing high-value customers.

- Consumer impact is likely to follow (laptops, phones, TVs, and smart devices).

Vendor Responses: Different Speeds, Same Direction

Rethinking Hyperconverged Infrastructure in a High-Cost Era

Hyperconverged infrastructure (HCI) has been a fantastic solution for simplifying operations and scaling predictably. But in today’s climate, it’s worth reassessing whether it remains the most cost-effective approach.

HCI tightly couples compute, storage, and memory—meaning scaling storage often requires buying more servers and expensive RAM, even when compute isn’t needed.

Vendors like Nutanix built their early momentum on a strong “No SAN” message—positioning HCI as the end of external storage. That message resonated in an era of falling flash prices and abundant hardware supply.

In a market where:

- Server prices are rising

- Memory costs remain high

- Flash prices are surging

- AI is demanding large capacity and sustained performance

AI introduces a new challenge: large datasets, high-throughput pipelines, and GPU-driven processing require storage architectures that scale capacity and performance independently. In many cases, HCI struggles to meet these demands efficiently because:

- Scaling storage requires adding compute nodes

- GPU workloads need dense, high-performance shared storage

- Large AI datasets exceed the cost-effective limits of node-based scaling

We are seeing the industry narrative evolve. The strict “No SAN” stance is softening as customers demand greater flexibility, and the conversation increasingly includes external storage integration and converged models.

Interest is also returning to converged infrastructure, which separates compute and storage, to allow:

- Independent scaling of storage without buying more servers

- Better cost control when flash prices spike

- High-performance shared storage that better supports AI pipelines

- Flexibility to adopt hybrid storage tiers
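To make the coupling argument concrete, here is a minimal Python sketch comparing the cost of adding storage in whole HCI nodes versus external shelves. Every name and number in it (`NodeSpec`, `ShelfSpec`, the prices and capacities) is a hypothetical placeholder, not a vendor quote; substitute your own figures.

```python
import math
from dataclasses import dataclass


@dataclass
class NodeSpec:
    """Hypothetical HCI node; figures are placeholders, not real quotes."""
    usable_tb: float  # usable storage capacity per node
    price: float      # full node price (CPU + RAM + flash)


@dataclass
class ShelfSpec:
    """Hypothetical external storage shelf; figures are placeholders."""
    usable_tb: float  # usable storage capacity per shelf
    price: float      # shelf price (storage only)


def hci_expansion_cost(extra_tb: float, node: NodeSpec) -> float:
    """Storage arrives only in whole nodes, compute and RAM included."""
    return math.ceil(extra_tb / node.usable_tb) * node.price


def disaggregated_expansion_cost(extra_tb: float, shelf: ShelfSpec) -> float:
    """Storage arrives in shelves; no additional servers are purchased."""
    return math.ceil(extra_tb / shelf.usable_tb) * shelf.price


# Assumed inputs for illustration only.
node = NodeSpec(usable_tb=40, price=60_000)
shelf = ShelfSpec(usable_tb=100, price=70_000)
extra_tb = 200

hci_cost = hci_expansion_cost(extra_tb, node)
shelf_cost = disaggregated_expansion_cost(extra_tb, shelf)
print(f"HCI nodes: {hci_cost:,.0f}  external shelves: {shelf_cost:,.0f}")
```

With these placeholder inputs the node-based path costs more than twice as much, because each node carries CPU and RAM the workload did not ask for. The real gap depends entirely on actual quotes and may be smaller or reversed.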

HCI is still the right choice for many workloads. But the assumption that it is always the most efficient architecture no longer holds. Cost dynamics have changed.

Hybrid Strategies Beyond Tiering: Cloud as a Pressure Valve

Tiering between flash and HDD is one lever. Another increasingly relevant option is hybrid cloud as an interim capacity strategy.

When on-prem flash costs spike or supply tightens, the ability to extend workloads or datasets into the cloud—then migrate back when conditions stabilize—can provide critical flexibility.

This approach allows organizations to:

- Avoid locking into high on-prem flash costs during shortages

- Scale capacity temporarily without large capital outlays

- Maintain performance by placing the right workloads in the right location

- Repatriate data when flash pricing normalizes

The key is seamless mobility. Solutions that enable consistent data management, replication, and workload portability across on-prem and cloud environments become strategic—not optional.

Without this capability, hybrid cloud becomes a one-way door. With it, it becomes a financial and operational safety valve.
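One way to reason about the safety-valve idea is a rough break-even calculation: how many months of cloud rental, plus the cost of moving data back, equal the premium paid for buying flash during the shortage. The sketch below is a simplification under stated assumptions. All prices are hypothetical, the function names are made up for illustration, and it assumes on-prem pricing really does normalize, which nobody can guarantee.

```python
def buy_now_cost(tb: float, now_price_tb: float) -> float:
    """Buy on-prem flash today at the shortage price."""
    return tb * now_price_tb


def cloud_bridge_cost(tb: float, months: float, cloud_tb_month: float,
                      egress_per_tb: float, later_price_tb: float) -> float:
    """Rent cloud capacity, pay egress to repatriate, then buy on-prem later."""
    return tb * (months * cloud_tb_month + egress_per_tb + later_price_tb)


def break_even_months(now_price_tb: float, later_price_tb: float,
                      cloud_tb_month: float, egress_per_tb: float) -> float:
    """Months of cloud rental at which both options cost the same."""
    if cloud_tb_month <= 0:
        raise ValueError("cloud_tb_month must be positive")
    return (now_price_tb - later_price_tb - egress_per_tb) / cloud_tb_month


# Assumed per-TB prices, for illustration only.
months = break_even_months(now_price_tb=170, later_price_tb=100,
                           cloud_tb_month=5, egress_per_tb=10)
print(f"Cloud bridging is cheaper if prices normalize within {months:.0f} months")
```

The model ignores performance differences, operating costs, and migration effort, so treat the result as a first filter rather than a decision. Note how the egress term eats into the break-even window: high repatriation costs are what turn the bridge into a one-way door.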

The Illusion of “Better Deals” in a Shortage

Option 1: New Supply Chains (e.g., Chinese Vendors)

- Support language and regional coverage

- Geopolitical and compliance risks

- Supply prioritization (domestic vs. international customers)

Option 2: Vendors Holding Prices

- How long can they absorb rising costs?

- Will delayed increases become more severe later?

- If they offer only flash, what happens when you must scale?

If your architecture has no HDD tier, your choices become binary: pay or stop growing.

Flash-Only Architectures: The New Lock-In Risk

That is a different kind of lock-in: economic lock-in.

Rethinking Optimization: Hybrid Is Back

Cost-Control Strategies Regaining Relevance

- Flash as cache, HDD as capacity tier

- Automated tiering between flash and disk

- Workload placement based on performance needs

- Shorter planning horizons (months, not years)
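How much a capacity tier cushions a flash price spike can be sketched with simple arithmetic. The example below is illustrative only: the 20% flash share and the per-TB prices are hypothetical, HDD pricing is assumed to stay flat, and the 70% increase is used purely as an illustration of a severe spike.

```python
def blended_cost_per_tb(flash_fraction: float, flash_price_tb: float,
                        hdd_price_tb: float) -> float:
    """Capacity-weighted cost per TB for a flash + HDD mix."""
    if not 0.0 <= flash_fraction <= 1.0:
        raise ValueError("flash_fraction must be between 0 and 1")
    return flash_fraction * flash_price_tb + (1.0 - flash_fraction) * hdd_price_tb


def pct_increase(before: float, after: float) -> float:
    """Relative increase from before to after, in percent."""
    return (after - before) / before * 100.0


# Assumed inputs: 20% of capacity on flash, HDD price unchanged.
FLASH_BEFORE, FLASH_AFTER, HDD = 100.0, 170.0, 25.0

hybrid_before = blended_cost_per_tb(0.2, FLASH_BEFORE, HDD)
hybrid_after = blended_cost_per_tb(0.2, FLASH_AFTER, HDD)

print(f"All-flash increase: {pct_increase(FLASH_BEFORE, FLASH_AFTER):.0f}%")
print(f"Hybrid increase:    {pct_increase(hybrid_before, hybrid_after):.0f}%")
```

Under these placeholder inputs, a 70% flash increase raises the blended cost per TB by about 35%. The smaller the flash share, the smaller the exposure, at the price of the trade-offs listed next.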

Trade-offs to Acknowledge

- HDD increases power usage and carbon footprint

- Performance tuning becomes more complex

- Not all workloads tier cleanly

Still, optimization today is about resilience, not elegance.

Procurement Must Evolve: Speed Is Now a Competitive Advantage

The Old Model

- Multi-month evaluations

- Extensive RFP cycles

- Year-long budget buffering (10–20%)

The New Reality

- Prices change in weeks—or days

- Supply disappears overnight

- Delayed decisions cost real money

This is not recklessness—it is operational agility.
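The cost of a slow approval cycle can be estimated roughly. The sketch below assumes, hypothetically, that once a quote expires the price drifts upward by a fixed percentage per week; the 5% weekly rate, the 45-day approval cycle, and the function name `delay_cost` are illustrative assumptions, not market data.

```python
import math


def delay_cost(quote_price: float, approval_days: int,
               validity_days: int, weekly_increase: float) -> float:
    """Extra spend when approval outlasts the quote's validity window.

    Assumes the price compounds by weekly_increase for every started
    week past expiry (a simplification, not a market model).
    """
    days_late = max(0, approval_days - validity_days)
    if days_late == 0:
        return 0.0
    weeks_late = math.ceil(days_late / 7)
    return quote_price * ((1.0 + weekly_increase) ** weeks_late - 1.0)


# Validity windows of 30 days, 2 weeks, and 24 hours,
# against an assumed 45-day approval cycle.
for validity in (30, 14, 1):
    extra = delay_cost(quote_price=100_000, approval_days=45,
                       validity_days=validity, weekly_increase=0.05)
    print(f"validity {validity:>2} days -> extra cost {extra:,.0f}")
```

The absolute numbers matter less than the shape: as validity windows shrink, the same approval cycle becomes steadily more expensive, which is the argument for shortening governance steps rather than skipping them.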

Key Considerations to Avoid Future Lock-In

1. Architectural Flexibility

- Can you mix flash and HDD?

- Can workloads move across tiers?

2. Supply Chain Resilience

- Where do components originate?

- How does the vendor prioritize shortages?

3. Licensing and Commercial Model

- Are bundles forcing unwanted capabilities?

- What is the exit cost?

4. Scaling Economics

- What happens when you need 2× capacity?

- Are there lower-cost expansion paths?

5. Procurement Agility

- Can you approve purchases quickly?

- Are governance processes slowing response?

The Bigger Shift: From Buyer’s Market to Seller’s Market

- Vendors control supply.

- Licensing models consolidate value.

- Flash prices are rising.

- Alternatives require time most organizations don’t have.

Make decisive, informed choices that preserve optionality.

If you’re seeing similar trends in your environment, I’d value your perspective. How are you adapting your storage architecture and procurement strategy in this new climate?
